- cross-posted to:
  - technology@lemmy.world

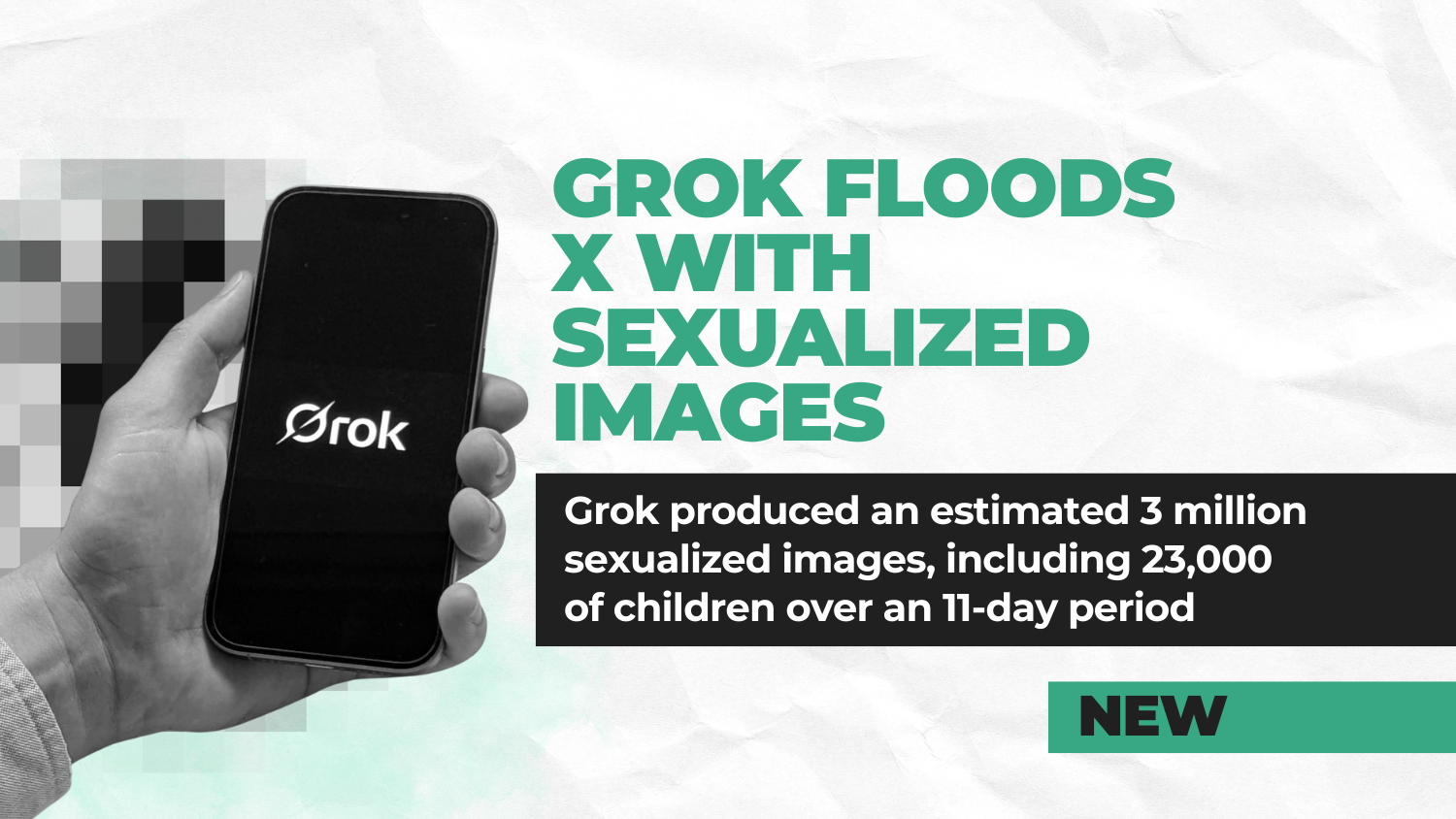

The AI tool Grok is estimated to have generated approximately 3 million sexualized images, including 23,000 that appear to depict children, after the launch of a new image editing feature powered by the tool on X, according to new analysis of a sample of images.[1]

The image-generating feature exploded in popularity on December 29th, shortly after Elon Musk announced a feature enabling X users to use Grok to edit images posted to the platform with one click.[2] The feature was restricted to paid users on January 9th in response to widespread condemnation of its use for generating sexualized images, with further technical restrictions on editing people to undress them added on January 14th.[3]

Grok has no agency. Elon played a heavy role in designing the tool, promotes and profits from its use, and has failed to stop users from producing this material with what can only be considered a feature of his software.

You're probably giving the guy too much credit. I think they use a version of FLUX. Replacing stuff is one of its core features.

Although he might've ordered NSFW-related concepts to be added, because that's definitely what he uses it for almost exclusively.